Grok deepfake lawsuit against Elon Musk’s company: what is happening?

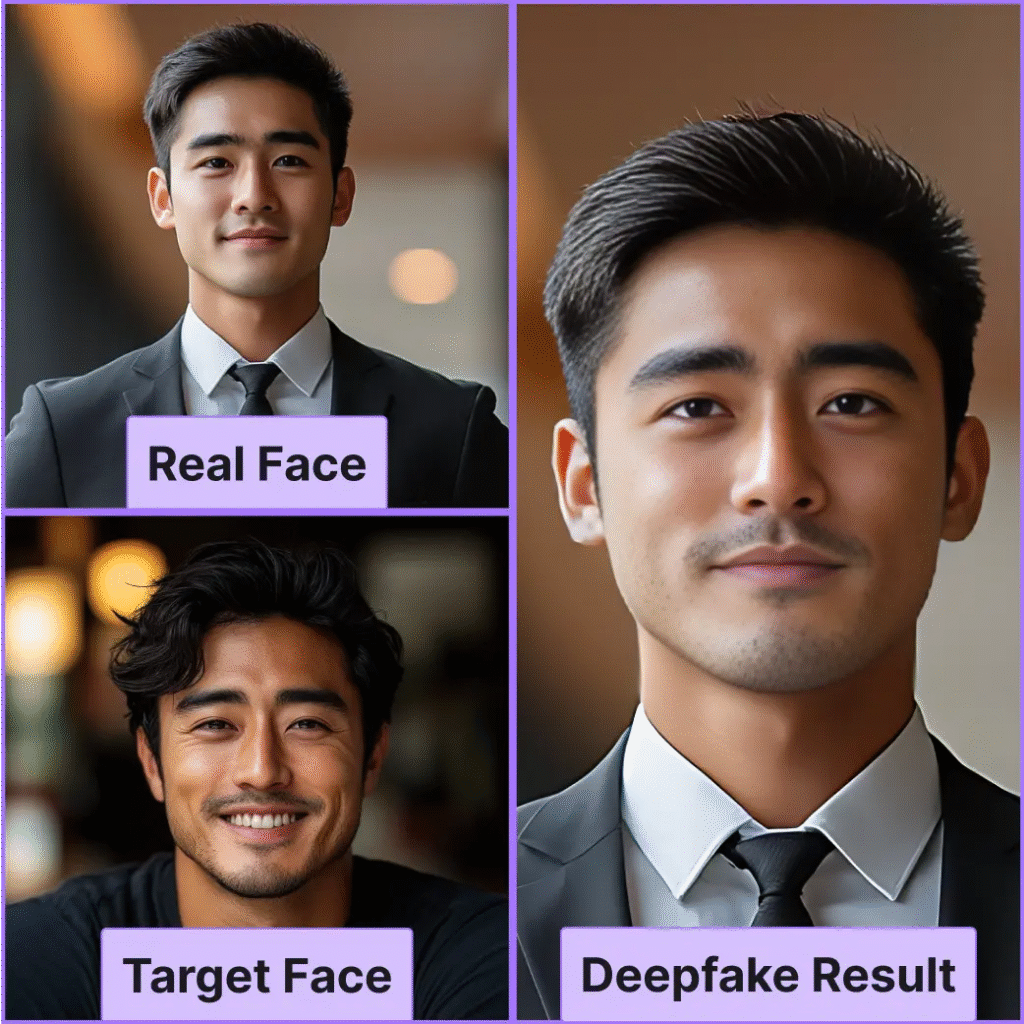

The Grok deepfake lawsuit began when Ashley St Clair, the mother of one of Elon Musk’s children, filed a case in New York against his tech firm xAI. She says the company’s chatbot, Grok, was used to generate and spread sexualised deepfake images of her without her permission, causing her deep humiliation and emotional pain.

According to news reports, she is asking for financial damages and court orders to stop the company from creating any more such images of her. The lawsuit has also drawn the attention of regulators, who are already investigating how the tool was used to make fake sexual pictures of adults and even images that appear to show minors in sexual situations.

What Ashley St Clair says in her complaint

In her complaint, Ashley St Clair argues that the chatbot’s design made it easy for users to turn normal photos into explicit fakes. She says that people on Musk’s social platform X uploaded pictures of her and then asked Grok to undress or sexualise those images.

Her filing describes several disturbing examples.

- In one case, users allegedly took a photo of her when she was about 14 years old, fully clothed, and asked Grok to generate a fake version showing her undressed.

- In other cases, the tool is accused of creating images of her as an adult in sexual poses, including one where she appears to wear a bikini decorated with extremist symbols, something she found especially offensive as a Jewish woman.

She says these actions invaded her privacy, damaged her reputation and left her in ongoing emotional distress. The lawsuit claims that xAI should have expected this kind of misuse and built stronger safeguards from the start.

How xAI and Elon Musk have responded

So far, xAI has not given detailed public answers to the specific claims in the lawsuit. However, Musk has posted online saying he was not aware of any naked images generated by Grok and that the system is supposed to refuse anything illegal and follow the law in each place where it operates.

Before the lawsuit, xAI had already said Grok would stop changing “images of real people into revealing outfits” on X after criticism over deepfake nudes. Even so, the complaint argues that for a long time the tool still allowed harmful image requests and that people reporting abuse did not receive proper protection. This is why the case is likely to test how much responsibility such platforms have for what their tools create.

Role of California’s attorney general and regulators

The lawsuit is unfolding at the same time as a separate investigation by California’s attorney general, Rob Bonta. His office sent a cease‑and‑desist letter to xAI, ordering it to stop the creation and spread of non‑consensual sexual content made with Grok.

Bonta said his office has seen a “flood” of reports about fake sexual images showing women and, in some cases, children, and warned that such material may be illegal under state law. This means that alongside the lawsuit in New York, xAI could also face penalties or restrictions from California if it fails to meet legal standards on privacy and child protection.

Why deepfake cases like this are so serious

The case highlights how damaging fake sexual images can be for a person’s life. Victims often feel they have lost control over their own face and body, and worry that friends, family or employers might see the fake pictures and believe they are real.

Experts say non‑consensual deepfakes can:

- Destroy reputations and careers.

- Cause long‑term anxiety, shame and trauma.

- Be used to harass, blackmail or silence critics and journalists.

Because of this, lawmakers in the United States and other countries are debating stronger rules and penalties for anyone who creates or hosts such content. The lawsuit against xAI could become a high‑profile test case that shapes future laws and platform policies.

What this means for big tech and users

The lawsuit sends a clear warning to large tech groups that powerful chat and image tools need strict safety limits. When a service lets people generate fake pictures of real individuals, especially minors, the legal and moral risks are extremely high.

For users, the case is a reminder to:

- Be careful about where personal photos are shared.

- Use privacy settings and reporting tools on platforms like X.

- Support clear rules that punish non‑consensual deepfakes and protect victims.

Many digital‑rights groups argue that consent and dignity must be at the centre of any image‑editing or content‑generation tool. How courts and regulators handle the case will show whether the law can keep up with these fast‑moving technologies.